My Biggest Common Lisp Project

First published March 8, 2008 on blogspot.com.

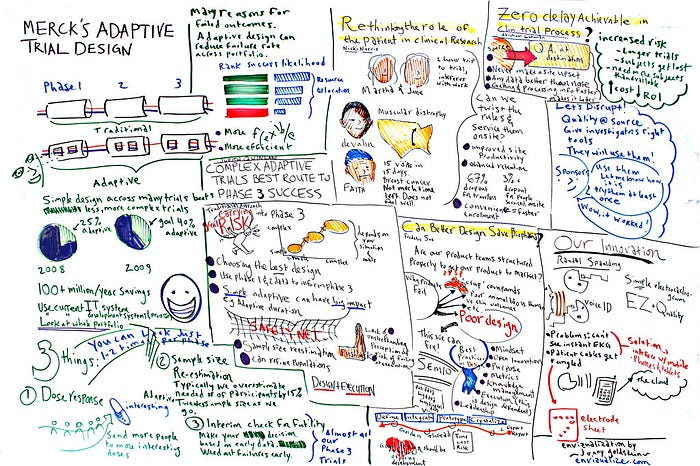

Someone asked how much Lisp I have really done. I am building a resume these days getting ready to look for some Lisp work, so I thought I would kill two birds with one stone and write up my experience as the architect and lead developer (out of two, for the most part) of a clinical drug trial management system.

Over a couple of years we built a system from eighty thousand lines of Lisp, throwing away fifty thousand more along the way. We were in the classic situation described by Paul Graham in On Lisp: not knowing exactly what program we were writing when we set out on our mission. We used C libraries for writing and reading 2D barcodes, forms scanning, character recognition, generating TIFFs, and probably a couple I am forgetting.

The application was a nasty one:

- capture clinical drug trial patient data as it was generated on paper at the participating physician’s site, using scanner and handwriting recognition tools;

- validate as much as possible with arbitrary edits, such as complex cross-edits against other data;

- allow a distributed workgroup to monitor sites and correct high-order mistakes;

- track all changes and corrections; and

- do everything with an impeccable audit trail, because the FDA has very strict requirements along these lines.

Making things worse, doctors are often as sloppy about trial details as the FDA is strict about having the rules followed. But drug companies cannot run trials themselves because the FDA demands that investigators be independent to avoid conflict of interest. Getting compliance is tough, and that is the opportunity we were targeting — better compliance through automation at a granular, near real-time level sufficient to give drug companies effective oversight over investigator performance.

Big Bucks

The stakes are tremendous. Blockbuster drugs can earn millions of dollars a day but only while under patent protection. Unfortunately, patents must be acquired at the start of the trial process, which can run for many years. A third or more of the revenue-rich patent life is spent just getting to market. Big snafus in trials can force months of delay with an opportunity cost of millions a day.

When I got the call from my good friend who was the angel and visionary on this project, he was two years in with not a lot to show for it and his top developer had just given notice. I went in to hear what they were up to, and to do an exit interview with the dearly departing.

The business plan was to score big by handling hundreds of trials a year. This would be especially tough because every trial was different. Each involved a custom set of forms to be collected over a series of patient exams. These forms varied from exam to exam. Business logic drove validation of the forms and how the trial was to run, and varied from trial to trial as dictated by what is known as a trial protocol.

When I heard all this I knew we would have to find a solution that did not involve custom programming for each trial. The application would have to be configurable without programming, by a power user trained in the software. If Lisp is the programmable programming language, we needed a programmable application.

“We wrote this and cannot scale it. Maybe you can.”

Later I learned that competitors in our space had half our functionality and could not handle more than fifteen trials a year and were not profitable. They attempted what they called a “technology transfer” to the drug companies, translating as “we cannot scale this approach but maybe you can”. Hmm.

The departing guru showed me what they had so far, which was a system built in Visual Basic with an SQL database. First came the demo of the interactive module, a pure mockup with no substance behind it. Then he showed me the scanning and forms recognition tools in action. He printed out a form built using Word or Visio, scanned it back in, then opened the JPEG file in a manual training tool that came with the recognition software. Field by field he painfully taught the software where to find each field, what its name should be, and whether it was numeric, or alpha, yadda yadda.

Ouch. The process was slow and created a brittle bridge from form to application. Worse, these forms might be redesigned at any time leading up to trial commencement in response to concerns from external trial review panels. They might also change during a trial in response to field experience. So at any given time, multiple versions of the same form could be in existence (trials at different sites do not start and stop together). Not only would developers be forever retraining the recognition and then modifying the software to know about new or changed fields, but they also would have to keep alive all the multiple versions of form definitions and match them to specific forms as they got scanned back in, or opened for editing.

I was already thinking about automating everything and this process was one that had to be automated. I asked the departing guru if the forms recognition software could be trained via an API instead of via the manual utility program he was using. He looked at me for a long moment, then said, “Yes”, realizing, I think, that that is what they should have done. I myself realized he was leaving in part because he was a systems guy at heart and this was one deadly application to undertake.

To my friend’s relief I agreed to give his vision a try. Now it was my turn, but I did not consider for a moment switching to Lisp. This was a serious business application and I knew my friend would never go for it. Nowadays, with more experience, that is exactly where I would begin; back then I did not even consider fighting that fight.

No problem. I had pulled off table-driven designs in the past using COBOL and the table-driven design was going to be the key to our success, not the language. Making the table thing work also meant we would want a custom language so we could express the table data easily.

Back home, I looked up at my bookshelf for the manuals to various Lex/Yacc tools I had bought over the years. But I did not look for very long. I knew I could at least use Lisp to quickly prototype the language using a few clever macros, while Lex/Yacc were known to be bears to use. So Lisp it would be, just to prototype the trial specification language.

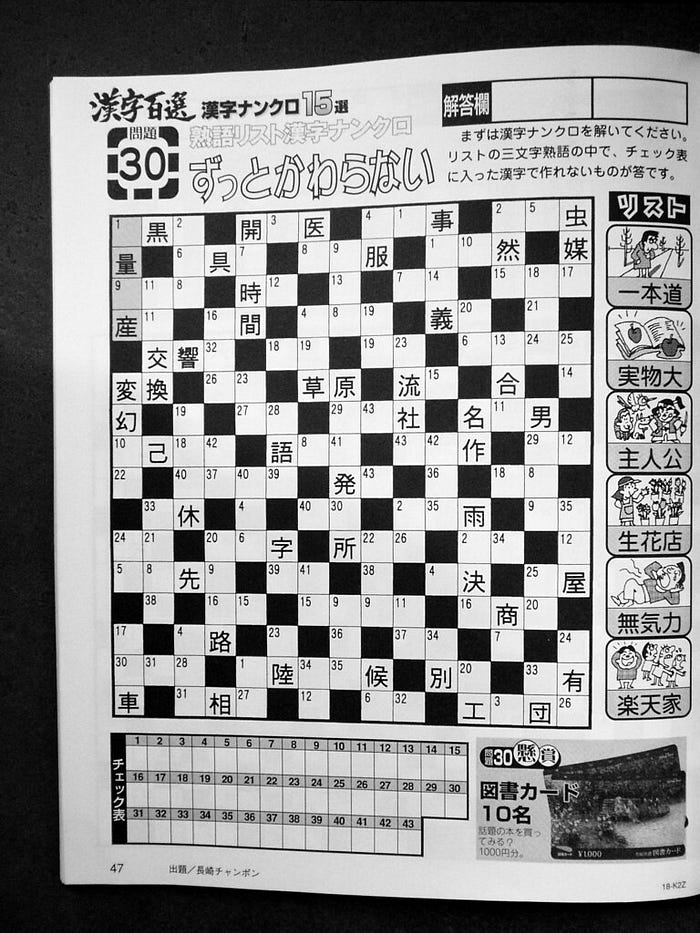

Then I noticed something, my latest hobby creation using Lisp and my cherished Cells hack: an interactive version of the New York Times Double-Acrostic puzzle. It had individual boxes for each letter like any good crossword and that is what we needed for the patient data! So I was halfway home, and sure enough after a few hours I was looking at a perfect mockup of the first page of the sample clinical trial we were using to build the software. And my mouth dropped open at what I saw.

A blinking cursor. In the first character box on the page. I saw that and knew we would be using Lisp for the project. Hunh?

Well, we had to print these forms out, train the forms recognition software, scan the forms back in, feed the scan image to the trained software, and then — wait for it — let the users make corrections to the data. And there it was, the blinking cursor waiting for my input. I had been so concerned with getting the form laid out and ready for printing that I had forgotten that I had cannibalized the code from the interactive crossword puzzle software.

We’re done! How famous are those last words? Anyway, my next step was to make sure this wonderful stuff running on my Macintosh under Digitool MCL would run on Windows NT, the planned deployment platform. I figured Lisp I could sell, but the Mac? In 1998? Not.

So on my own dime I acquired a copy of AllegroCL and spent a few weeks porting everything to Windows. It was more of an OS port than a Lisp port, because Common Lisp is an ANSI standard with which vendors comply very well. (Thanks, Paul Dietz!) When I saw everything working on Windows I called my friend, took a deep breath, and said I wanted to use Lisp.

“OK,” he said.

“And an OODB,” I said.

“OK.”

Some fight. The OODB was AllegroStore, then the vendor’s solution for persistent CLOS. (Now they push AllegroCache, a native solution, where AllegroStore was built atop the C++ OODB ObjectStore, and a graph DB, AllegroGraph.)

Why the OODB? I had noticed something while playing with the database design for the system: I missed objects. I had done some intense relational design back in the day and enjoyed it but by the time in question I had also done a ton of OO and I missed it in my schema design. Not only would AllegroStore give me OO in my DB, it would do so in the form of persistent CLOS, meaning my persistent data would be very much like all my other application data. The whole problem of mapping from runtime data structures to the database — something programmers have long taken for granted — would go away.

About a year later we had the whole thing working and I was about to take a working vacation traveling first to San Francisco for LUGM ’99, the annual Lisp meeting (here is the best talk) and then on to Taiwan for two weeks of work and socializing with friends. I decided to use this time to tackle a performance problem that had reached “The Point of Being Dealt With”. The solution I had in mind seemed like it would be fun (more famous last words) and nicely self-contained and not too onerous for a working holiday.

Basically my approach to performance is not to worry about it until I have introduced enough nonsense that the application is running slowly enough to hurt my productivity. Then it is time for what I call Speed Week, a true break from new development dedicated purely to making the system hum again. And we had reached that point.

Otherwise the application was wonderful, just as planned. A trial form was specified using defform, defpage, defpanel, deffield, etc. From that single specification came beautifully typeset paper forms, the corresponding DB schema, unrestricted business logic, automatic scanner training, scanning, recognition, and screen forms for on-line editing of the scanned data.
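To make the "single specification, many artifacts" idea concrete, here is a rough sketch. It is written in Python rather than the Lisp macros of the real system, and every name in it (`Field`, `Panel`, `Form`, `db_schema`, `recognition_zones`, the sample fields) is invented for illustration; the actual defform family is not shown in this post.

```python
from dataclasses import dataclass

@dataclass
class Field:
    name: str
    kind: str    # "numeric" or "alpha" (hypothetical type names)
    boxes: int   # number of one-character boxes on the page

@dataclass
class Panel:
    title: str
    fields: list

@dataclass
class Form:
    name: str
    version: int
    panels: list

def db_schema(form):
    """Derive a column name -> column type mapping from the one specification."""
    return {f.name: ("INTEGER" if f.kind == "numeric" else "TEXT")
            for p in form.panels for f in p.fields}

def recognition_zones(form):
    """Derive what the recognizer would need to be taught, in page order."""
    return [(f.name, f.kind, f.boxes) for p in form.panels for f in p.fields]

# One declarative description of a form; nothing else is written by hand.
visit1 = Form("visit-1", 1, [
    Panel("Vitals", [Field("weight_kg", "numeric", 3),
                     Field("pulse", "numeric", 3)]),
    Panel("Patient", [Field("initials", "alpha", 3)]),
])

schema = db_schema(visit1)
zones = recognition_zones(visit1)
```

The point of the design is that adding a field means editing `visit1` only; the schema and the recognizer training data follow from it.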

Life was good, but run-time instantiation of a form was a pig. It occurred to me that each time a page was instantiated the exact same layout rules were being invoked to calculate where all the text and input fields should fall, coming up with the exact same numbers. The forms author merely specified the content and simple layout such as rows and columns, and Cells rules used font metrics to decide how big things were and then how to arrange them neatly. The author simply eyeballed the result and decided how many panels (semantically related groups of fields) would fit on a page. So, coming straight from the source, there was a load of work being done coming up with exactly the same values each time. Timing runs confirmed this was where I had my performance problem.

What to do? Sure, we could (and did!) memoize the results and then reuse the values when the same page was loaded a second time, or we could… and then my mouth dropped open again. The alternative (described soon) would let me handle the six hundred pound gorilla I have not mentioned.

But first I have to tell you about an overarching problem. One prime directive was that trial sites be fully functional even if they were off the network. Thin-client solutions need not apply. So we had to get client sites set up with information specific to all and only those trials they would be doing. And keep that information current as specifications changed during a trial. My six hundred pound gorilla was version control of the software and forms.

Now for the alternative. What if we instantiate a form in memory, let the cells compute the layout, and then traverse the form writing out a persistent mirror image of what we find, including computed layout coordinates? Business logic can be written out symbolically and read back in because, thanks to Dr. McCarthy, code is data.

We avoided the redundant computations, but more importantly we now had a changed form specification as a second set of data instead of as a software release. Work on the original performance problem had serendipitously dispatched Kong, because now the replication scheme we would be doing anyway would be moving not just trial data in from the clinical sites but also the configuration data from the drug companies out to the sites. We’re done!

If it works. The test of the concept was simple. I designed one of the forms using the trial specification DSL (the macros), compiled, loaded, instantiated, displayed, printed it, did some interactive data entry. Cool, it all works.

Then I “compiled” the form as described above, writing everything out to the OODB. Then I ended my Lisp session to erase any knowledge of the original form source specification.

Now I start a new Lisp session and do not load the source code specifying the form. Using a second utility to read the form information back in from the database, I instantiate the form in memory. And it works the same as the one instantiated from the original specification. Life was good and about to get better.
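The compile-then-reload experiment can be sketched as follows. This is an illustration in Python, not the original Lisp, and it makes loud assumptions: a plain dictionary stands in for the OODB, JSON stands in for persistent CLOS, and `compute_layout`, `compile_form`, `load_form`, the box width, and the row height are all made up for the example.

```python
import json

CALLS = {"layout": 0}  # counts how often the expensive layout step runs

def compute_layout(spec, box_width=12, row_height=20):
    """Stand-in for the layout rules: derive x, y, width for every field."""
    CALLS["layout"] += 1
    placed, y = [], 0
    for row in spec["rows"]:
        x = 0
        for name, boxes in row:
            width = boxes * box_width
            placed.append({"name": name, "x": x, "y": y, "width": width})
            x += width
        y += row_height
    return placed

def compile_form(spec, store):
    """Run the layout once and write spec plus computed coordinates to the store."""
    key = f'{spec["name"]}/v{spec["version"]}'
    store[key] = json.dumps({"spec": spec, "layout": compute_layout(spec)})
    return key

def load_form(key, store):
    """Rebuild the form from stored data alone; no layout rules are run."""
    return json.loads(store[key])

store = {}  # hypothetical stand-in for the database
spec = {"name": "visit-1", "version": 1,
        "rows": [[["weight", 3], ["pulse", 3]], [["initials", 3]]]}
key = compile_form(spec, store)
first = load_form(key, store)
second = load_form(key, store)  # a "new session": still no layout work
```

Because the stored form is just data, shipping a changed form to a site becomes a matter of replicating records rather than releasing software.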

Round about now we had the whole thing working, by which I mean all the hard or interesting pieces had been solved and seen to run from form design to printing to capture and validation. Work remained, such as the partial replication hack, but the DB design had taken this requirement into account and was set up nicely to support such a beast. That in turn would let us toss off the workgroup requirement by which remote trial monitors hired by drug companies could keep an eye on the doctors. But then came a four-day Fourth of July and I decided to treat myself to some fun.

For eighteen months we had been working with exactly one specific form from the sample trial. It occurred to me to test how powerful my DSL was, and actually build out the remaining forms of the eighteen-visit trial. The experiment would be compromised two ways.

First, I would be the power user. Talk about cheating. But we understood the friendliness of the specification language would have to be developed over time as folks other than me came to grips with it, and also that those power users would have push-button access to software experts when they got stuck — we were developing an in-house application, not a presentation authoring tool for the general public.

The second compromise went the other way, making things harder. As I proceeded through the new forms I would be encountering specific layout requirements for the first time, and writing new implementation code as much as I would be simply designing the forms in power user mode. Regarding this, I imposed a constraint on myself: I would design the forms to match exactly the forms as they had been designed in Word, even though in reality one normally takes shortcuts and tells the user “you know, if you laid it out this way (which actually looks better) we would not have to change the layout code”. But I wanted to put the principle to test that we had developed a general-purpose forms design language.

What happened? It was a four-day weekend and I worked hard all four days, but the weather was great and I enjoyed a good dose of Central Park each day skating. On the development side, as predicted, I spent at least half my time extending the framework to handle new layout requirements presented by different forms. But by the end of the weekend I was done with the entire trial. Yippee.

I spent a day writing out the pseudo-code for the partial replication scheme and the other guy started on that while I started thinking about the workgroup aspect. It occurred to me that the mechanism for storing forms describing patient visits could be used for any coherent set of trial information, such as a monitor’s so-called “data query” in which they did a sanity check on something that had passed validation but still looked wrong. The only difference with these forms compared to the trial data forms (already working) would be that they would not be printed and scanned, so… we’re done!

Talk about code re-use. Somewhere along the way I had accidentally created a 4GL in which one simply designed a screen form and went to work, the database work all done for you.

Now I started working on the interface that would knit all this together. GUIs are insanely easy with my Cells hack. Downright fun, in fact. So much fun, so easy to do, and then so foolproof that I missed them almost immediately. The persistent CLOS database lacked Cells technology. Of course. It was just persistent data as stored by the AllegroStore ODB.

But Cells at the time was implemented as a CLOS metaclass, and so was AllegroStore… no, you are kidding… multiple-inheritance at the metaclass level?! That and a little glue and… we’re done! The GUI code now simply read the database and showed what it found to the user. When anything changed in the database, the display was updated automatically. (I always enjoy so much seeking out the Windows “Refresh view” menu item. Not!)

For example, the status of form Visit #1 for Patient XYZ might be “Printed”. Or — since one business rule said forms should be printed and scanned in short order so the system could tell when forms had been lost — it might say “Overdue for Scanning”. So now the user looks around, finds the form, puts it in the sheet feeder of the scanner and hits the scan button. In a moment the user sees the status change to “Ready for Review”.

The beauty of having wired up the database with Cells is that the scanning/recognizing logic does not need to know that the user is staring at an “Overdue” indicator on the screen that no longer applies. Tech support will not be fielding calls from confused users saying “I scanned it six times and it still says overdue!” The scanning logic simply does its job and writes to the database. The Cells wiring notices that certain GUI elements had been looking for this specific information and automatically triggers a refresh. And as always with Cells, the GUI programmer did not write any special code to make this happen, they simply read that specific bit of the database. Cells machinery transparently recorded the dependency just as it automatically propagates any change.
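For readers who have not seen this style of dataflow, here is a minimal sketch of the mechanism: a value records whoever reads it while a formula is running, and re-runs those formulas when it changes. It is a toy in Python, not the Cells library, and the `Cell` class, its methods, and the status strings' use here are assumptions made for illustration. The plain `status` cell stands in for a persistent database slot.

```python
class Cell:
    """A value that remembers which formulas read it and re-runs them on change."""
    _reader = None  # the formula cell currently being evaluated, if any

    def __init__(self, value=None, formula=None, observer=None):
        self.formula = formula
        self.observer = observer
        self.dependents = set()
        self._value = value
        if formula is not None:
            self._recompute()  # first evaluation also records dependencies

    def get(self):
        if Cell._reader is not None:
            # Dependency is recorded as a side effect of an ordinary read.
            self.dependents.add(Cell._reader)
        return self._value

    def set(self, value):
        if value != self._value:
            self._value = value
            for dep in list(self.dependents):
                dep._recompute()

    def _recompute(self):
        previous, Cell._reader = Cell._reader, self
        try:
            new = self.formula()
        finally:
            Cell._reader = previous
        if new != self._value:
            self._value = new
            if self.observer:
                self.observer(new)  # e.g. ask the GUI to redraw
            for dep in list(self.dependents):
                dep._recompute()

redraws = []
status = Cell("Overdue for Scanning")  # stands in for a DB slot
label = Cell(formula=lambda: f"Status: {status.get()}",
             observer=redraws.append)
status.set("Ready for Review")  # what the scanning logic would write
```

Note that the code writing `status` knows nothing about `label`, and the `label` formula contains no subscription code; it simply reads the value it needs.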

In the end we had taught Cells and the ODB a few kinds of tricks. It was possible for a dynamic slot of a (dynamic) GUI instance to depend on the persistent slot of a DB instance, or on the population of a persistent class (“oh, look, a new data query just came in”). Persistent instances can have dynamic slots in AllegroStore and these could depend on persistent slots, and perhaps the scariest bit: persistent slots could depend on other persistent data. The database was alive!

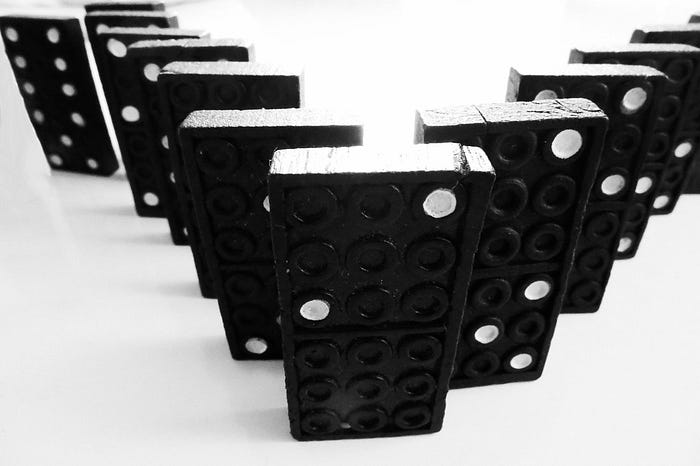

One change could ripple out to cause other changes in the database. For example, “Overdue Form” was a persistent attribute calculated from the fact that a form had been printed but that it had not been scanned. If that status held for a day the database automatically grew a new persistent instance, an “Alert” instance visible to trial monitors who could intervene to see why the clinical site was not taking care of business. When the form got scanned, Cells logic caused the status to move up to “Scanned/ready for review” and the “Alert” instance got deleted. All in classic Cells declarative fashion: “an Alert exists if a document is overdue for one day” takes care of both creating and destroying the instance.
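The declarative flavor of that rule can be sketched without any reactive machinery at all: state which alerts should exist, then make the stored set match. The Python below is only an illustration with invented names (`overdue`, `sync_alerts`, the record layout), and it simplifies the rule to "printed and unscanned for at least one day"; in the real system Cells would trigger the recalculation rather than an explicit call.

```python
from datetime import datetime, timedelta

def overdue(form, now, limit=timedelta(days=1)):
    """True if the form was printed at least `limit` ago and never scanned."""
    return form["scanned_at"] is None and now - form["printed_at"] >= limit

def sync_alerts(forms, alerts, now):
    """Make the alert set match the rule: create missing alerts, delete stale ones."""
    wanted = {f["id"] for f in forms if overdue(f, now)}
    for form_id in wanted - set(alerts):
        alerts[form_id] = {"form": form_id, "raised_at": now}
    for form_id in set(alerts) - wanted:
        del alerts[form_id]
    return alerts

printed = datetime(1999, 7, 1, 9, 0)  # arbitrary example timestamp
forms = [{"id": "visit-1/XYZ", "printed_at": printed, "scanned_at": None}]
alerts = {}

sync_alerts(forms, alerts, printed + timedelta(hours=2))
early = set(alerts)   # too soon for an alert

sync_alerts(forms, alerts, printed + timedelta(days=1, hours=1))
late = set(alerts)    # the alert now exists

forms[0]["scanned_at"] = printed + timedelta(days=1, hours=2)
sync_alerts(forms, alerts, printed + timedelta(days=1, hours=3))
after_scan = set(alerts)  # scanning removes it again
```

One rule covers both creation and deletion, which is the property the quoted sentence describes.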

I’ll never forget a religious moment I had scanning forms so I could work on the interface’s mechanism for correcting scanner or recognition errors. When I called up page two of some form all the fields were blank. I had never in hundreds of scans seen the scanner/recognition software completely miss a page, but a peek at the log file showed the page had indeed gone unrecognized.

Stunned at this first ever failure I just grabbed the second page, put it back in the sheet feeder and hit the scan button.

Talk about U/X. We used 2-D barcodes to identify pages. The nice thing about the barcodes is that I (or a doctor’s assistant) could just do that. We did not have to tell the software “OK, now I am scanning page two of form 1 for patient XYZ.” The barcode data was in fact nothing but the GUID we assigned to the page when creating it in the ODB, so the printed paper had object identity. :)

The other neat thing here is that, when instantiating a page, we linked it to the version of the form template from which it was derived. This took care of the version control problem created by changing forms — a scanned page was able to look in the database to find the template from which it was created and scan and open itself. Users never had to say what page they were dropping into the sheet feeder, or worry about the order. Back to my missing page…
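A small sketch of that lookup chain, from barcode to page to the exact template version: it is Python for illustration only, the in-memory dictionaries stand in for the database, and all names (`add_template`, `print_page`, `accept_scan`, the field names) are hypothetical.

```python
import uuid

templates = {}  # (form name, version) -> template record
pages = {}      # GUID string -> page record

def add_template(name, version, fields):
    templates[(name, version)] = {"name": name, "version": version,
                                  "fields": fields}

def print_page(patient, name, version, page_no):
    """Create the page record and return the GUID the barcode would carry."""
    guid = str(uuid.uuid4())
    pages[guid] = {"patient": patient, "template": (name, version),
                   "page_no": page_no, "values": {}}
    return guid

def accept_scan(barcode, recognized):
    """The barcode alone identifies the page and the template it came from."""
    page = pages[barcode]
    template = templates[page["template"]]
    page["values"] = {f: recognized.get(f) for f in template["fields"]}
    return page

add_template("visit-1", 1, ["weight", "pulse"])
old_page = print_page("XYZ", "visit-1", 1, 2)

# The form is redesigned after the first page was already printed.
add_template("visit-1", 2, ["weight", "pulse", "temperature"])
new_page = print_page("ABC", "visit-1", 2, 2)

# Pages can be scanned in any order; nobody says which page is which.
scanned_new = accept_scan(new_page, {"weight": "70", "pulse": "64",
                                     "temperature": "37"})
scanned_old = accept_scan(old_page, {"weight": "82", "pulse": "71"})
```

The page printed from version 1 is still interpreted against version 1, even though version 2 exists by the time it is scanned.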

The scanner started whirring, the page went through, and log diagnostics from the recognition process started zooming by (OK, this time we recognized the page, the last time was truly a fluke) and then even before it happened I realized (Omigod!) what was about to happen.

I turned my eyes to the blank page still up on my screen and waited…Boom! The data appeared.

Having wired the database to the screen, what happened was this: the recognition logic simply read the forms and wrote out the results as usual, updating each persistent DB form field with a value. The screen field widget had gotten its display value by reading that DB field. Cells reactively told the screen field widget to “calculate” again its display value. It was different, so the Cell observer for the screen field generated an OS update event for the field. The application redrew the field in response to the update event.

That was so cool to see happen.

We’re done! Literally. In the end our tiny little operation was never able to persuade big pharma we could handle the grave responsibility of not screwing up trials, even though IBM itself loved our work and worked with us to pitch it to pharma. But that is another story.